Is Augmented Intelligence the AI of the future?

In the past, artificial intelligence (AI) was often portrayed as a technology that could one day replace humans. Today, the prevailing assumption is that this will not happen in the foreseeable future, and that it should not. That is why we now speak of augmented intelligence rather than artificial intelligence.

For a long time, the goal of artificial intelligence was to replace humans completely in many tasks. For example, the field was described as follows [1]:

- “The art of creating machines that perform functions that require intelligence when performed by people.” (1990) [2]
- “The study of how to make computers do things at which, at the moment, people are better” (1991) [3]

This approach aims to create computer programs that can perform not only repetitive tasks, but also tasks that would require intellectual effort from a person. The Turing Test [4], developed by Alan Turing in 1950, provides an operational definition of artificial intelligence. A user interacts with a computer program in writing and asks it questions. If, at the end of the test, the user cannot tell whether the answers came from a computer or from a person, the Turing Test is considered passed. In recent years, however, the question has often been raised whether such artificial intelligence is useful or desirable at all.

Digital ethics

A number of reports suggest that the data used to train software is not always fair. For example, it has been shown that women and men are described differently in Wikipedia articles [5] [6]. Researchers in the USA showed that a program used for years to calculate offenders' risk of reoffending disadvantaged African Americans [7]. At a large tech company, software designed to support the hiring of new employees turned out to be unfair to women [8]. There are many more examples, and these numerous scandals have brought the topic of digital ethics into the mainstream media.

The digital society of the future must therefore address what cooperation between software and humans should look like. Humans and computers have complementary abilities: computers are very good at processing large amounts of data in a very short time and at performing calculations efficiently. However, they are not capable of reflecting on decisions or questioning them morally. There are therefore certain activities that a computer cannot do and, for that reason, should not do.

When voice assistants discriminate

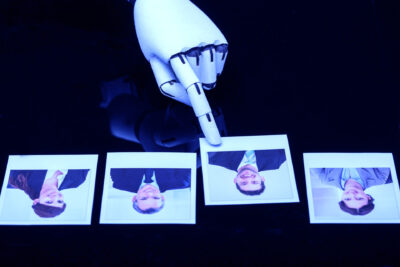

Because of this difference in capabilities, the role of artificial intelligence needs to be rethought. We therefore often use the term augmented intelligence instead of artificial intelligence. The idea behind it is that the computer serves as a tool that augments human intelligence but does not replace humans [9]. A typical example of such collaboration is the voice assistant found in most smartphones. When we ask it to suggest restaurants nearby, the voice assistant does not decide where we will eat; it provides the information we need to make that decision ourselves.

Does this free us from the problems of digital ethics? No, because it is up to us, the humans, to make the decision and to bear the responsibility for it. It is therefore also up to us to question the data provided critically and to include this reflection in the decision. In the restaurant example, it could be that a certain restaurant was not offered to us at all, although it is much closer than the others. So even in the context of augmented intelligence, we cannot avoid actively and regularly scrutinizing the data and decision suggestions that our tools generate.
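The restaurant example can be turned into a small check. The following Python sketch is only an illustration under stated assumptions: the `Restaurant` type, the function `closer_but_omitted`, and all names and distances are hypothetical, and it assumes we have our own independent list of nearby restaurants to compare against. It does not reflect how any real voice assistant works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Restaurant:
    """Hypothetical record: a restaurant and its distance from the user."""
    name: str
    distance_km: float


def closer_but_omitted(candidates, suggested_names):
    """Return known restaurants that were not suggested although they are
    closer than every restaurant the assistant did suggest."""
    suggested = [r for r in candidates if r.name in suggested_names]
    if not suggested:
        # Nothing was suggested, so there is nothing to compare against.
        return []
    nearest_suggested = min(r.distance_km for r in suggested)
    return [
        r for r in candidates
        if r.name not in suggested_names and r.distance_km < nearest_suggested
    ]


# Hypothetical data: our own list of nearby restaurants ...
known = [
    Restaurant("Corner Bistro", 0.2),
    Restaurant("Pasta Place", 0.9),
    Restaurant("Sushi Bar", 1.4),
]
# ... and the names the assistant actually offered.
assistant_suggestions = {"Pasta Place", "Sushi Bar"}

for r in closer_but_omitted(known, assistant_suggestions):
    print(f"Not suggested although closer: {r.name} ({r.distance_km} km)")
```

A hit from such a check does not prove discrimination. It only flags a case in which a human should ask why the closer option was left out.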

Ethics must be programmed in

The challenge of the next few years is to integrate this new form of collaboration into the processes of software development and application, together with all the measures needed to prevent and control the associated risks of unethical behavior and discrimination. Appropriate processes must be planned both within the project itself and at regular intervals during the operation of the software. The concrete issues to be evaluated are not uniform, because the areas of application, the technologies (video, audio, text, etc.) and the types of problems (forms of discrimination, unethical decisions) all differ. They must therefore be specified and evaluated in each project, analogous to traditional risk management. In the concept of augmented intelligence, the human being takes responsibility and therefore has the active task of reflecting on and critically questioning the machine’s decision proposals. Only in this way will we be equipped for successful cooperation between humans and machines in the digital society of the future.

References

- [1] Russell, S. & Norvig, P., 2010. Artificial Intelligence: A Modern Approach. Upper Saddle River (New Jersey): Pearson.

- [2] Kurzweil, R., 1990. The Age of Intelligent Machines. s.l.: MIT Press.

- [3] Rich, E. & Knight, K., 1991. Artificial Intelligence (Second Edition). s.l.: McGraw-Hill.

- [4] Turing, A. M., 2004. The Essential Turing. s.l.: Oxford University Press.

- [5] Wagner, C., Graells-Garrido, E., Garcia, D. & Menczer, F., 2016. Women through the glass ceiling: gender asymmetries in Wikipedia. EPJ Data Science, 5(1).

- [6] Jadidi, M., Strohmaier, M., Wagner, C. & Garcia, D., 2015. It’s a man’s Wikipedia? Assessing gender inequality in an online encyclopedia. s.l., s.n.

- [7] Larson, J., Mattu, S., Kirchner, L. & Angwin, J., 2016. How We Analyzed the COMPAS Recidivism Algorithm. ProPublica, May.

- [8] Dastin, J., 2018. Amazon scraps secret AI recruiting tool that showed bias against women. San Francisco, CA: Reuters.

- [9] https://digitalreality.ieee.org/publications/what-is-augmented-intelligence
