Dangerous mathematics or dangerous humans? – Opportunities and risks of an algorithmised world

Artificial intelligence (AI) offers plenty to discuss. On 23 March 2021, experts from the BFH Centre Digital Society discussed how rational data-based decisions really are and how we can distinguish useful AI applications from dangerous ones. Read a short summary of the event here.

Fig. 1: The so-called Schachtürke.

The so-called Schachtürke (chess Turk, 1769), a chess-playing automaton developed by Wolfgang von Kempelen (Figure 1), impressed the Empress of Austria more than 200 years ago with its supposed artificial intelligence. Behind the chess Turk, however, was neither magic nor artificial intelligence, but a human being hidden inside the machine. It all started with this trick, says Heinrich Zimmermann, lecturer and coach at BFH Wirtschaft, and it continued until IBM's chess computer beat the reigning world chess champion in 1997. From then on, it was clear that machines can be stronger than humans.

This is not necessarily a problem when playing chess, but what about a simulated psychotherapy conversation? Can we attribute too much power to computers? Joseph Weizenbaum, a German-American computer scientist, became a critic of AI systems when he noticed, for example, that assistants were starting to have serious conversations with the simulator. There is a danger that computers are overestimated and credited with abilities they do not have, says Heinrich Zimmermann. That is why it is important to realise that humans were not only inside the chess Turk, but are also part of today's artificial intelligence: humans build, repair and maintain the system, its data and its programs. “Without humans, an artificial intelligence system is not even possible.”

How AI discriminates

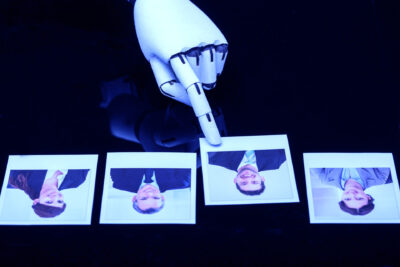

Mascha Kurpicz-Briki, a computer scientist at BFH Technik & Informatik, shook up the participants with negative examples of AI applications: a passport photo upload application tells people of Asian origin that their eyes are closed, and the translation tool Google Translate produces gender-stereotypical translations from languages with gender-neutral pronouns (see Figure 2). The risks of AI thus include discrimination based on origin or gender. Mascha Kurpicz-Briki explains that discriminatory AI systems are caused by the training data from which the system learns to make decisions. This data already contains stereotypes from our society, and a system trained on it reinforces the prejudices that already exist. Another danger is the use of AI for purposes that are not compatible with society’s morals (e.g. fully automated weapons).

Fig.2: Google Translate provides gender-stereotyped pronouns.
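How skewed training data turns into stereotyped output can be illustrated with a deliberately simplified sketch. It is not how Google Translate or any real translation system works: the toy corpus, the function names and the "pick the most frequent pronoun" rule are all assumptions made up for this illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus, invented for illustration only:
# (occupation, pronoun) pairs as they might occur in training text.
TOY_CORPUS = [
    ("doctor", "he"), ("doctor", "he"), ("doctor", "she"),
    ("nurse", "she"), ("nurse", "she"), ("nurse", "he"),
]


def train(corpus):
    """Count how often each pronoun appears with each occupation."""
    counts = defaultdict(Counter)
    for occupation, pronoun in corpus:
        counts[occupation][pronoun] += 1
    return counts


def pick_pronoun(counts, occupation):
    """Return the pronoun seen most often with this occupation."""
    return counts[occupation].most_common(1)[0][0]


model = train(TOY_CORPUS)
# The source sentence uses a gender-neutral pronoun, so the "model"
# has to choose one and falls back on the majority in its data.
print("doctor ->", pick_pronoun(model, "doctor"))
print("nurse  ->", pick_pronoun(model, "nurse"))
```

In this toy data the pronouns are split two to one for each occupation, yet the rule always outputs the majority pronoun. A moderate imbalance in the data therefore becomes a consistent stereotype in the output, which is the reinforcement effect described above.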

Mascha Kurpicz-Briki’s contribution does not only show the problems of AI applications. We also learn that AI can open up new opportunities and support humans in their tasks. The Witty Works Diversifier (co-funded by Innosuisse) reduces discrimination in job advertisements, and another application recognises hate speech on the internet and reports it to the community. AI is even helping to make our oceans cleaner again.

Mascha Kurpicz-Briki emphasises that the technology is not fundamentally good or bad; what matters is the use case. Instead of Artificial Intelligence, she prefers to speak of Augmented Intelligence, because the moral and ethical decision and the responsibility always remain with the human being.

A holistic approach to regulation

In the third presentation of the evening, Reinhard Riedl discusses how algorithms can be regulated so that their use is in line with social goals. Computational thinking emerged as early as 6000 years ago, says the head of the BFH Centre Digital Society, and the desire soon arose to build computing machines that would eliminate human error. The need for regulation arose from the interaction of mathematical innovations and the exponential growth of computing speed, he says. According to Riedl, the progressive application of computational thinking makes transparency grow and shrink at the same time, so that a dynamic imbalance between opportunities and threats emerges. New options and new insights stand against misuse and the incomprehensibility of the new tools.

Data protection neither works nor is sufficient, Riedl sums up: various actors hold profiles of us without our being aware of it. In his contribution, Riedl pleads for a new holistic approach and presents a framework with seven fields of action. “We should not only regulate the algorithms themselves, but treat them, together with the criteria, the computational thinking and the design of the interface to humans, as one package that is the object of regulation. Generic regulation is not possible; what is needed is contextual regulation that looks not only at the effect, but also at the narratives that are used.”

The discussion with the speakers following the three contributions revolves around the development of fair chatbots, possible solutions to discriminatory translations and the question of regulating algorithms. It remains unclear what should be regulated and by whom. Jonas Pfister, PhD student of Prof. DDr. Erich Schweighofer at the University of Vienna, examines the topic from a legal perspective and emphasises that partial automation, i.e. the use of AI in the sense of augmented intelligence, must also be legally regulated (full automation is regulated via GDPR Art. 22). The question is: is it still legally compliant if humans only have a short time to make an AI-assisted decision?

With more open questions than answers, we end the virtual exchange and look forward to further discussions.

Video recording

Here you can watch the video recording of the event.

About the event

The event of the BFH Centre Digital Society was originally planned for spring 2020. It finally took place virtually via MS Teams on 23 March 2021. We thank our cooperation partners.

