Diffusion Models: A New Horizon in Image Generation

As the curtain fell on last year, most conversations about generative AI in public forums and the media orbited around text generation, with names like ChatGPT stealing the limelight. But the transformative power of Artificial Intelligence, spearheaded by deep learning, extends far beyond the realm of words. A standout example is image generation, which has become a vibrant arena of exploration and innovation in the world of AI.

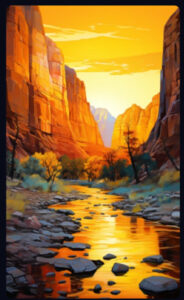

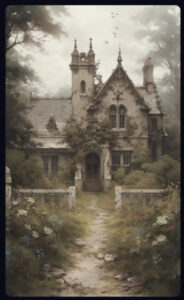

These AI-driven tools, known as generative models, have taken image creation to new frontiers, crafting images that are not only realistic but often captivating in their details. They have even ventured into the domain of art and design, pushing the boundaries of what is possible with technology.

In the lineup of these image generative models, a few notable names come to the fore: Variational Autoencoders (VAEs), flow-based models, and Generative Adversarial Networks (GANs). But among these players, one has been quietly making waves due to its unique capabilities: the diffusion model.

So, if you’re intrigued by the idea of AI models capable of creating unique and realistic images, here is an introduction to the world of diffusion models and their applications in image generation.

The Science Behind Diffusion Models

Diffusion models are a type of generative model that creates new data, such as pictures, by imitating a process similar to the way tiny particles move randomly through a fluid. This might sound complex, so let’s break it down.

Imagine you have a clear picture. The diffusion model’s job, at first, is to gradually add random visual “noise” to the image until what you have left is just random, messy speckles, like static on a TV screen. The transformation from a clear picture to this noisy static is guided by a set of rules known as a stochastic differential equation (SDE).
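To make the noising step concrete, here is a minimal sketch in Python. It assumes a discrete-time version of the process with a linear noise schedule, a common simplification of the SDE view; the function names, the schedule values, and the random 8x8 stand-in image are illustrative assumptions, not part of any particular model.

```python
import numpy as np

def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear noise schedule (assumed values)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def add_noise(image, t, alpha_bars, rng):
    """Return the image noised to step t, plus the Gaussian noise that was mixed in."""
    noise = rng.standard_normal(image.shape)
    noisy = np.sqrt(alpha_bars[t]) * image + np.sqrt(1.0 - alpha_bars[t]) * noise
    return noisy, noise

rng = np.random.default_rng(0)
image = rng.uniform(-1.0, 1.0, size=(8, 8))  # stand-in for a real picture scaled to [-1, 1]
alpha_bars = make_alpha_bars()

slightly_noisy, _ = add_noise(image, 10, alpha_bars, rng)   # early step: picture mostly intact
mostly_noise, _ = add_noise(image, 999, alpha_bars, rng)    # last step: almost pure static
```

Early steps keep nearly all of the picture, while by the last step the retention factor is close to zero and the result is practically indistinguishable from random noise.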

To generate a new image, this process is reversed. Starting with a sample from the noise distribution, the model applies the reverse of the SDE to transform the noise back into a meaningful image.
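The reverse direction can be sketched as a loop that starts from pure noise and removes a little of it at every step. The sketch below assumes a discrete, DDPM-style update rule rather than a continuous SDE solver, and it uses a hypothetical `oracle_predict_noise` function in place of a trained neural network: it pretends the training set contained a single image, `target`, so the noise can be computed exactly. All names and schedule values are illustrative assumptions.

```python
import numpy as np

def sample(predict_noise, shape, betas, rng):
    """DDPM-style ancestral sampling: start from noise and denoise one step at a time."""
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    x = rng.standard_normal(shape)                 # draw from the noise distribution
    for t in reversed(range(len(betas))):
        eps_hat = predict_noise(x, t)              # estimate of the noise contained in x
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
        if t > 0:
            # Intermediate steps re-inject a small amount of fresh noise.
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(shape)
        else:
            x = mean                               # the final step adds no noise
    return x

betas = np.linspace(1e-4, 0.02, 1000)              # assumed linear noise schedule
alpha_bars = np.cumprod(1.0 - betas)
target = np.full((4, 4), 0.5)                      # the only "image" in this toy dataset

def oracle_predict_noise(x, t):
    """Hypothetical stand-in for a trained network (exact only for this toy dataset)."""
    return (x - np.sqrt(alpha_bars[t]) * target) / np.sqrt(1.0 - alpha_bars[t])

generated = sample(oracle_predict_noise, target.shape, betas, np.random.default_rng(0))
```

In a real system the oracle would be replaced by a neural network, and the output would be a new image resembling the training data rather than a copy of one example.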

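How does it know how to do that? During training, the model is shown images from its training dataset with a known amount of noise added, and it learns to undo that noise. In practice, this usually means predicting the noise that was added and continually minimizing the difference between that prediction and the actual noise, which amounts to learning how to recover the original image.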
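As a rough illustration of the training signal, the sketch below computes one commonly used loss: the mean squared error between the noise that was actually added and the model's guess. It only evaluates the loss and does not perform any gradient updates. The `untrained_model` placeholder, the schedule values, and the random stand-in image are assumptions made for the example.

```python
import numpy as np

def denoising_loss(predict_noise, image, alpha_bars, rng):
    """Noise the image at a random step, then score the model's guess of that noise."""
    t = rng.integers(len(alpha_bars))              # pick a random noise level
    noise = rng.standard_normal(image.shape)       # the noise that is actually added
    noisy = np.sqrt(alpha_bars[t]) * image + np.sqrt(1.0 - alpha_bars[t]) * noise
    return np.mean((predict_noise(noisy, t) - noise) ** 2)  # mean squared error

alpha_bars = np.cumprod(1.0 - np.linspace(1e-4, 0.02, 1000))      # assumed linear schedule
image = np.random.default_rng(1).uniform(-1.0, 1.0, size=(8, 8))  # stand-in training image

def untrained_model(noisy, t):
    """Hypothetical placeholder that always guesses 'no noise'."""
    return np.zeros_like(noisy)

loss = denoising_loss(untrained_model, image, alpha_bars, np.random.default_rng(0))
```

Training repeats this over many images and noise levels while adjusting the network's weights so that the loss shrinks; a model that predicted the noise perfectly would reach a loss of zero.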

So, in a nutshell, diffusion models generate new images by first turning clear images into noise and then skillfully turning that noise back into clear, meaningful images.

Why Are Diffusion Models Important?

Diffusion models are proving themselves to be game-changers because of their ability to create impressive, high-quality images while also providing fine-grained control over the creation process. Compared with counterparts such as GANs (Generative Adversarial Networks) or VAEs (Variational Autoencoders), diffusion models come with a clear, well-structured training goal, which helps them avoid common issues such as the mode collapse that GANs in particular often struggle with.

What sets diffusion models apart is their step-by-step nature, which lends itself to generating images at various resolutions. This versatility is valuable in tasks like enhancing image resolution or filling in missing parts of an image. The images created by these models are not only of exceptional visual quality, but they also offer exciting possibilities across a range of sectors, including video games, virtual reality, and even the film industry.

Popular Diffusion Models

- OpenAI’s DALL-E 2[1]

- Google’s Imagen[2]

- Stability AI’s Stable Diffusion[3]

- Midjourney[4]

In conclusion, diffusion models, with their unique image generation capabilities, are carving out a niche in the field of artificial intelligence. As research continues, we can expect to see even more impressive and diverse applications of these models in the near future. They signify yet another step forward in the continuous journey of machine learning and AI, painting a promising picture of what lies ahead.

References

[1] https://openai.com/dall-e-2

[2] https://imagen.research.google/
