How to detect and reduce bias in AI systems

Bias is a major cause of unfair and discriminatory decisions made by AI systems. For example, offers for well-paid jobs were algorithmically shown almost exclusively to male users [1]. The awareness-raising framework presented here aims to help developers and users identify and reduce bias. It has been successfully validated on two real-world AI systems.

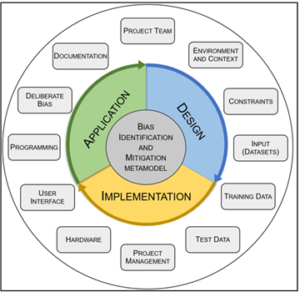

As AI systems spread into more and more areas of life, the problem of bias is growing with them. Biases inherent in the thinking and actions of developers and operators, as well as biases in the training data sets, reinforce discriminatory decisions by AI systems. Based on an extensive literature review [2], an awareness-raising framework was developed to sensitise the people involved so that they can identify and reduce bias. This sets it apart from technical frameworks, which attempt to reveal discriminatory patterns in training data [3].

Biases come in different guises. “Direct bias” refers to features at the core of an AI system, such as inappropriate training data and the self-learning algorithms based on it [4]. In the example mentioned at the beginning, the historically biased data fed into the system led to correspondingly discriminatory decisions. “Indirect bias”, on the other hand, results from the surrounding ecosystem, for example through the adoption of traditional approaches and attitudes [5].

The framework takes a holistic approach and covers the entire life cycle, from system design through implementation to operation. A subdivision into 12 categories allows a structured application of the framework (see Fig. 1).

Figure 1: The bias mitigation framework focuses on 12 categories (rectangles).

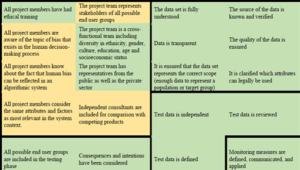
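To make the direct bias from the introductory example more concrete, the following minimal Python sketch computes the selection rate per group and the ratio between two groups (often called the disparate impact ratio), a measure of the kind that technical toolkits such as [3] report. The toy data, the field names `gender` and `shown_offer`, and the function names are invented for illustration; they are not taken from the project or from any library.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact(records, group_key, outcome_key, unprivileged, privileged):
    """Ratio of the unprivileged group's selection rate to the privileged group's."""
    rates = selection_rates(records, group_key, outcome_key)
    if rates[privileged] == 0:
        raise ValueError("privileged group has no positive outcomes")
    return rates[unprivileged] / rates[privileged]


# Hypothetical toy data: who was shown a well-paid job offer.
records = (
    [{"gender": "male", "shown_offer": True}] * 8
    + [{"gender": "male", "shown_offer": False}] * 2
    + [{"gender": "female", "shown_offer": True}] * 2
    + [{"gender": "female", "shown_offer": False}] * 8
)

rates = selection_rates(records, "gender", "shown_offer")
ratio = disparate_impact(records, "gender", "shown_offer", "female", "male")
print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
```

A ratio close to 1 means both groups are selected at similar rates; in this toy data the ratio is 0.25, so female users see the offer far less often. Such a check only addresses bias in the data and complements, rather than replaces, the awareness-raising framework.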

Although ethical aspects are frequently addressed in AI projects [6], this usually goes no further than references to general ethical principles [7]. The present approach recommends anchoring them more sustainably along two dimensions. On the one hand, detailed questions per category are used to trigger targeted, project-specific reflection. Figure 2 shows this checklist-based approach using the category “Project Team” as an example.

Figure 2: Excerpt from the checklist for the category “Project Team” (complete in [2]).

On the other hand, regular application and systematic documentation (see Figure 3) anchor ethical aspects sustainably as a mandatory standard in AI projects. The proposed framework was successfully validated in two real-world AI projects: the chatbot of a Swiss insurance company and the Smart Animal Health project of the Swiss Federal Office for Agriculture. In particular, documenting each application of the framework as a one-pager gives a clear overview of the result. Figure 3 shows the one-pager for the chatbot project. Green areas were recognised or taken into account in the project, yellow areas only partially. White areas would indicate blind spots or inapplicable criteria regarding bias in the respective AI project; none occurred in the chatbot project.

Figure 3: Excerpt from the one-pager for the chatbot project (complete in [2]). Bordered areas correspond to a category of the framework, containing the criteria of the respective checklist.
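The one-pager's colour coding can also be kept in a machine-readable form so that repeated applications of the framework are easy to compare. The following Python sketch is one possible way to do this, not the project's actual template: the class names and the two criteria are hypothetical, and only the category name “Project Team” and the three colours come from the text above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    CONSIDERED = "green"   # recognised or taken into account
    PARTIAL = "yellow"     # only partially taken into account
    OPEN = "white"         # blind spot or inapplicable criterion


@dataclass
class Category:
    name: str
    criteria: dict[str, Status] = field(default_factory=dict)

    def open_criteria(self):
        """Return the criteria that are still white in this category."""
        return [c for c, s in self.criteria.items() if s is Status.OPEN]


def summarise(categories):
    """Count criteria per status across all categories."""
    counts = {status: 0 for status in Status}
    for category in categories:
        for status in category.criteria.values():
            counts[status] += 1
    return counts


# Hypothetical criteria; the real checklist questions are given in [2].
project_team = Category(
    name="Project Team",
    criteria={
        "Team composition reviewed for diversity": Status.CONSIDERED,
        "Bias awareness training offered": Status.PARTIAL,
    },
)

summary = summarise([project_team])
for status, count in summary.items():
    print(f"{status.value}: {count}")
print("open criteria:", project_team.open_criteria())
```

Storing one such record per review makes it possible to see whether yellow and white areas shrink over the life cycle of a project.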

Several findings resulted from applying the framework to the two AI projects mentioned above. On the one hand, the framework itself was improved in a validation step in terms of applicability, usefulness and coverage of relevant categories. On the other hand, the projects involved benefited in particular from the broad coverage of the bias mitigation framework.

About the project

The project results received a Best Paper Award at a conference [8] and were published in the International Journal on Advances in Software [2]. Applicable templates are available.

References

- [1] D. Cossins, “Discriminating algorithms: 5 times AI showed prejudice,” New Scientist, 2018. https://www.newscientist.com/article/2166207-discriminating-algorithms-5-times-ai-showed-prejudice/

- [2] T. Gasser, R. Bohler, E. Klein, and L. Seppänen, “Validation of a Framework for Bias Identification and Mitigation in Algorithmic Systems,” International Journal on Advances in Software, vol. 14, no. 1&2, pp. 59-70, IARIA, Dec. 2021. https://arbor.bfh.ch/16560/

- [3] K. R. Varshney, “Introducing AI Fairness 360, A Step Towards Trusted AI,” IBM Research Blog, 2018. https://www.ibm.com/blogs/research/2018/09/ai-fairness-360/

- [4] W. Barfield and U. Pagallo, “Research Handbook on the Law of Artificial Intelligence. Edited by Woodrow Barfield and Ugo Pagallo. Cheltenham, UK,” Int. J. Leg. Inf., vol. 47, no. 02, pp. 122-123, Sep. 2019.

- [5] B. Friedman and H. Nissenbaum, “Bias in computer systems,” ACM Trans. Inf. Syst., vol. 14, no. 3, pp. 330-347, Jul. 1996.

- [6] SAS, “Organizations Are Gearing Up for More Ethical and Responsible Use of Artificial Intelligence, Finds Study,” 2018. https://www.sas.com/en_id/news/press-releases/2018/september/artificial-intelligence-survey-ax-san-diego.html

- [7] AlgorithmWatch, “AI Ethics Guidelines Global Inventory,” 2019. https://algorithmwatch.org/en/project/ai-ethics-guidelines-global-inventory/

- [8] T. Gasser, E. Klein, and L. Seppänen, “Bias – A Lurking Danger that Can Convert Algorithmic Systems into Discriminatory Entities,” in Centric2020 – The 13th Int. Conf. on Advances in Human-oriented and Personalized Mechanisms, Technologies, and Services, 2020, pp. 1-7. https://arbor.bfh.ch/13189/
