About the mainframes of the Iron Age – 60 years of digital transformation, part 2

Chuck and Ken, a father-son team of computer experts, take an entertaining look back at their own six decades in the IT industry. In the second part of the mini-series, they describe how the volumes of data from punch cards were converted into usable information and what role the first mainframe computers played. Their anecdotes complete the picture of how these first digital transformations went.

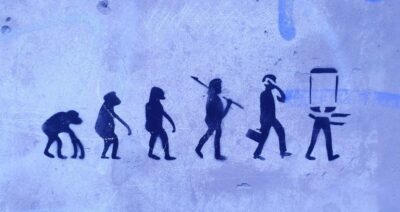

Digital transformations don’t just spontaneously happen; very often they are sold. New data means new processes; new processes mean new technology; and new technology means salespeople to sell it. Today everyone on the buying side is digitally aware, so salespeople must have more technical knowledge than their customers. Look at their backgrounds, and increasingly you’ll find college-educated IT professionals in the highest-tech sales roles.

This was not always the case. The further back in time we look, the less technically educated the key players were. The sales process did not require a lot of digital expertise, and certainly the first salespeople did not know about things like programming.

“The 1960s were the ‘Age of Iron’ when it came to computers, and the giants – IBM, Univac, Honeywell – ‘pushed iron.’ They sold computers to companies, provided programming schools for the companies’ programmers and – well, that’s about it. Each company had to program them, integrate them, and test them to make sure they worked.

The sales process was not yet about engineers carrying on high-level dialogues – it was the same ‘sleight of hand’ as the automobile business. The target was a VP in charge of IT, rarely an engineer or programmer; these were the finance or accounting leaders, since that’s where the tab machines lived. The sales demos were meant to be awesome. When I worked for a major vendor, we competed with IBM’s 1400 series, the industry best-seller. But IBM’s machine had a weakness: it could only do one thing at a time. Our model had ‘background-foreground’: not multitasking as we know it today, but at least two major functions running simultaneously.

So, in our well-rehearsed pitch, the salesman would call me to the demo, and the customer would yawn as I’d start up a 4-tape sort – everyone knew these took a looooong time, and everyone hated wasting time. Then – SURPRISE! – I’d have our machine start up a complex print job in parallel. Two major tasks at the same time – MAGIC! Where do we sign?” (Chuck Ritley)

Features need trained people

Today, particularly in product-oriented companies, the selection of new features is often quite sophisticated. The complexity can be large, and good alignment between product teams, e.g., through PI Planning, can be essential. Additionally, quantitative industry approaches such as weighted shortest job first (WSJF) can be helpful in decision making. But both require trained experts who understand the technical details and the big picture. A recent study overwhelmingly suggested that “investments in people skills” are central to successful digital transformation projects today. But was this always the case?

“Here’s how it worked. Setting up a mainframe was not easy. It required a huge expenditure of work hours. This was the era before commodity systems, so every program was custom-built for the company’s needs. This required two major up-front decisions: what to automate first, and who was going to do it.

The “what” might go up to the CEO level, at least for a blessing. Later commodity systems, for example MRP or DRP, forced inventory setup first. But in the Age of Iron that was not true. If the CEO of a distribution organization had a strong and reliable supply chain, the decision might be to automate sales reporting first. In my time at a large vendor, sales was often the very first task.

But if sales is first, what’s next? And the step after that, and so on? Someone had to lay out a systems plan showing how all data would connect to become usable information. Who in the company could set those priorities? At least in my experience, upskilling was not a common practice; the staff at companies were busy with their own work, so it made sense to bring in new data processing staff from the outside.

Today, there is no shortage of product owners or enterprise architects, but back then the manufacturer’s field support representatives would help as part of a package deal. For example, as a programmer/analyst I worked closely with the company’s new hires, often training them. Ideally, this process would begin at least 3 to 6 months before any other transformation work. And because the world was not yet flat, this often meant long periods spent at the manufacturer’s facility for access to training machines. Under the guidance of the manufacturer’s representative (me), we’d develop the system roadmap and information flow together and present it to their management for approval. IBM took this one step further with their Value Added Reseller (VAR) program; each VAR was the industry expert both for a business type and a geographic area. They did the selling and the installation; IBM provided the hardware.

So, digital transformation in the Age of Iron was about transforming the company one system at a time. And to do this, we needed to transform the new digital workers, one person at a time – quite literally, giving them deep training in a proprietary technology. End to end, this could take nearly a year.” (Chuck Ritley)

The parallel universe and Big Bangs

Software testing today is a well-established engineering discipline, with approaches (white-box, black-box, etc.), techniques (test automation, etc.), requirements (traceability, coverage, etc.) and tools. In some markets, such as India, engineering colleges turn out thousands of software test engineers, who work at companies that often take a factory approach to testing. The chief enablers of this are a low barrier to assembling the needed test data and a high throughput rate.

The key point, though, is that fast and thorough testing enables Big Bang rollouts, one of the classic hallmarks of digital transformation projects today. Existing business processes and the applications that support them are ripped out and – in what is called a Big Bang – replaced with new processes and systems. So, how were the earliest programs tested? And what did the rollouts look like?

“We didn’t have these enablers in the Age of Iron. But there was no shortage of reasons for testing. A manual business process could be digitally transformed. Or later, as mainframes themselves were replaced with newer mainframes, it was necessary to re-code the programs into the new language – we called this translation. Think about it: a single program execution could easily involve ten thousand punch cards and require many hours.

We did have some basic tools – especially for translation – but the main technique we used for quality control was called parallel running. With parallel running, the new mainframe was set up, and at the beginning of an accounting period the same data would be fed into it as into the original system or the mainframe we were replacing. At the end of the month, the results of the two were compared. If everything worked, all figures were identical. But depending on the nature of the data, sometimes a bug would only appear in a data set after many weeks. For the manufacturers’ reps like me, a good bug-free run meant the month-end was an excuse for a party.

What hasn’t changed is the message of an article I wrote in the 1970s: for successful systems, first debug the people problem. Introducing a new system brings with it a whole new set of problems – people problems. It’s not about how their tasks will change, but about their role in the new world, where and how much value they will provide, and their fears and concerns about the future.” (Chuck Ritley)
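
For readers who think in code, the month-end comparison at the heart of parallel running can be sketched in a few lines of modern Python. This is only an illustration under our own assumptions, not a reconstruction of any tool used at the time: the function name `compare_month_end`, the account names and all figures are hypothetical, and real runs compared printed reports and card decks rather than dictionaries.

```python
from decimal import Decimal


def compare_month_end(legacy, candidate):
    """Return (account, legacy_value, candidate_value) for every mismatch.

    Both arguments map an account name to its month-end total.
    An account missing from one system is reported with None on that side.
    """
    mismatches = []
    for account in sorted(set(legacy) | set(candidate)):
        old = legacy.get(account)
        new = candidate.get(account)
        if old != new:
            mismatches.append((account, old, new))
    return mismatches


# Hypothetical month-end totals produced by the old and the new system
# from the same input data.
legacy_totals = {
    "sales": Decimal("10450.00"),
    "inventory": Decimal("8200.50"),
    "payroll": Decimal("3100.00"),
}
new_totals = {
    "sales": Decimal("10450.00"),
    "inventory": Decimal("8200.05"),  # transposed digits: a bug in the new program
    "payroll": Decimal("3100.00"),
}

for account, old, new in compare_month_end(legacy_totals, new_totals):
    print(f"{account}: legacy={old} new={new}")
# prints "inventory: legacy=8200.50 new=8200.05"
```

An empty result corresponds to the bug-free run described above: every figure identical, and the new system ready to take over.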

Miniseries on digital transformation

This article is the second of a 4-part mini-series on digital transformation. Part 3 will be published next week.

Acknowledgements

It is our pleasure to thank Maria Kreimer and Nikola Gaydarov for detailed discussions that contributed significantly to this manuscript.
