Handling personal data according to the BGEID – Part 2

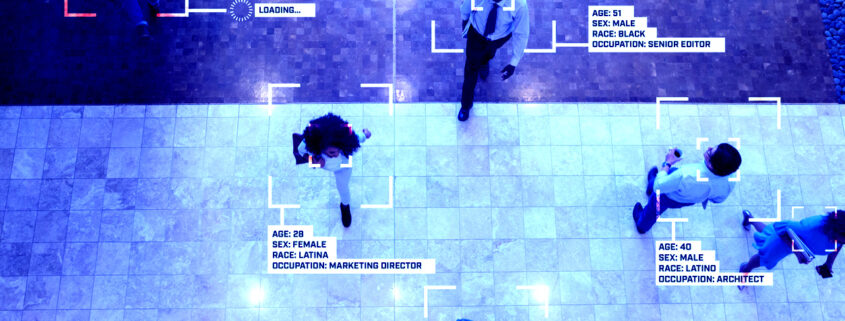

On 7 March 2021, a vote will be held on the BGEID, i.e. the Federal Act on Electronic Identification Services. Part 1 gave an overview of data retention under the BGEID. This part explains how personal data is protected. The BGEID provides a comprehensive concept for protecting the various categories of data that are at stake when the E-ID is used. Here is what it says about the obligations of the identity provider (IdP):

- Data minimisation: By using the state-confirmed E-ID selectively, the user can prevent too much usage data from being collected about her. She can limit her use to those cases in which the E-ID spares her a personal appearance before an authority. These are only a few cases per year. Two provisions of the revDSG support this: Art. 6 para. 2 revDSG (principle of proportionality) and Art. 6 para. 4 revDSG (obligation of the IdP to delete data when it is no longer necessary, i.e. usually after a few weeks). Art. 15 para. 1 lit. k BGEID also helps: the IdP must delete the data held on a person after six months at the latest (maximum period).

- Obligation to keep data in Switzerland: The IdP must have his systems in Switzerland and keep them here. A system transfer abroad or the involvement of sub-service providers abroad is prohibited if they gain access to data in any way. This follows from Art. 13 para. 2 lit. e BGEID.

- Obligation to separate data: The IdP must keep personal identification data, usage data and usage profiles separate from each other. The separation of data must serve the purpose of protecting against attacks. This is explicitly required by Art. 9 para. 3 BGEID.

- Purpose limitation: Art. 9 para. 1 BGEID prohibits the IdP from using personal identification data in accordance with Art. 5 BGEID for purposes other than identification in accordance with BGEID (see the above procedure). He is therefore forbidden, for example, to make assumptions about the user’s surfing history and, of course, even more so, to store records of this in his systems. The IdP is not allowed to do this even if the user would allow him to do so in a user contract. The BGEID thus goes beyond the revDSG, because under the revDSG it would be permissible to write an extension of purpose into the user contract. This is not possible under the BGEID.

- Linking prohibitions: The IdP is prohibited from linking personal identification data with any other data. Likewise, the IdP is prohibited from linking findings from a private customer relationship with the user, which may be offered in parallel, with E-ID data. This also follows from Art. 9 para. 1 BGEID.

- Prohibition of commercialisation: The IdP is prohibited from commercialising the data. For example, he is prohibited from selling data about the user. The IdP is not, however, prohibited from charging a fee for his services. A private IdP does not have to offer the service free of charge. Of course not!

- Prohibition of disclosure: The IdP may not disclose any data to third parties. He may use subcontractors who are based in Switzerland and whom he controls with regard to compliance with the law (namely the FADP and the BGEID) and the other requirements. However, he may not forward data to recipients such as shareholders, marketing service providers, etc. According to Art. 16 para. 2 BGEID, this prohibition of disclosure explicitly applies to all data concerned: (1) personal identification data, (2) usage profiles and (3) all other data that accrue when the E-ID is used (thus also usage data). The BGEID thus goes beyond the revDSG, as the latter only prohibits the disclosure of personal data requiring special protection (Art. 30 para. 2 lit. c revDSG). It is not at all clear whether any personal data requiring special protection exists within the scope of the BGEID (although this has been claimed with regard to the facial image). See the discussion below.

“Normal” personal data and personal data requiring special protection

The Swiss Data Protection Act (FADP) distinguishes between “normal” personal data and personal data requiring special protection. Particularly sensitive personal data is data for which people have already been killed (religion, race, skin colour, etc.). The aim is to make sure that a state that suddenly becomes corrupt or “evil” does not get hold of such data. There are thus historical reasons why such data is considered particularly worthy of protection. The revised Data Protection Act (expected to come into force in 2022) includes biometric data that uniquely identifies a natural person among the personal data requiring special protection (Art. 5 lit. c No. 4 revDSG). This refers to data that “sticks to the skin”, as it were (the image of one’s iris, the recording of one’s voice, the scan of one’s fingerprint, the documentation of one’s DNA). Such information is particularly worthy of protection for reasons of fairness: once a link has been created, one would have to “jump out of one’s skin”, as it were, or get rid of the body feature in question in order to break the data link. By comparison, the data processor has an easy time of it.

Qualification of the facial image

A passport photo can still be called a biometric data record. But it must be pointed out that a passport photo has a different character than, for example, a fingerprint or an iris scan, which are often cited as examples of biometric data. Because a face changes with age, a facial image cannot be regarded as unchangeable (unlike fingerprints or the iris). Also, in everyday life, the facial image is usually not handled with as much restraint as fingerprints and the like would be. Whether a passport photo is also to be considered particularly worthy of protection can therefore be critically questioned. While this was often affirmed under the current FADP, it can hardly be maintained under the revised FADP in view of the expressly restrictive wording of Art. 5 lit. c No. 4 revDSG: biometric data is personal data requiring special protection only if it uniquely identifies a natural person. If “uniquely” is to mean a one-to-one match, it is certainly open to question whether a passport photo meets that standard.

Personal data in need of special protection within the scope of the BGEID?

The personal data generated in the context of the E-ID is not personal data with an increased risk potential. It is “normal” personal data throughout. This should also apply to the facial image (which, however, can and must be described as controversial in view of the FADP still in force).

Significance of the discussion on particularly sensitive personal data

The discussion about the facial image and its classification must be put into perspective anyway. As a reminder: within the scope of the BGEID, the facial image is used for verification at the security levels “substantial” and “high”, and the IdP stores it only for an E-ID of the level “high”. Since the legal obligations of the BGEID go beyond those of the revDSG, one can ask whether the excitement about the facial image correctly reflects the actual risk. The discussion appears to be conducted more for effect.

Conclusion

In sum, the personal data contained in the E-ID harbours only a relatively moderate risk potential. At the same time, the protection afforded by the BGEID is significantly more stringent than the protection afforded by data protection law. This is shown by the above analysis. One cannot but come to the following conclusion: the excitement about the BGEID is overblown. The problematisation of the facial image quite obviously serves to sway public opinion. The BGEID can be approved with a clear conscience.
