Algorithms also discriminate – as their programmers tell them to do

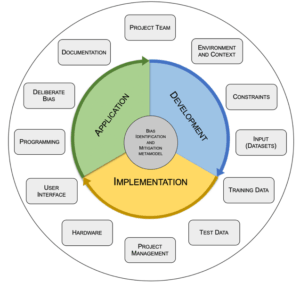

Companies are increasingly using artificial intelligence (AI) to make decisions, or base their decisions on its suggestions. These suggestions can be discriminatory. To prevent this, we need not only to understand program code on a technical level, but also to take human thinking and decision-making processes into account in order to detect and reduce systematic distortions. Co-author Thea Gasser proposes tools and procedures for this in her bachelor’s thesis [1], which was recently awarded a prize at the TDWI conference in Munich.

Recently, there has been growing concern about unfair decisions made with the help of algorithmic systems that lead to discrimination against social groups or individuals. For example, Google’s advertising system has been accused of showing high-income jobs predominantly to male users. Facebook’s automatic translation algorithm also caused a stir in 2017 when it chose the wrong translation for a user’s post, which led to the police questioning that user [2]. Another example is soap dispensers that do not work for people with dark skin [3]. In addition, there are several known cases of self-driving cars failing to recognise pedestrians or vehicles, resulting in loss of life [4].

Current research aims to map human intelligence onto AI systems. Robert J. Sternberg [5] defines human intelligence as “…mental competence, which consists of the abilities to learn from experience, adapt to new situations, understand and master abstract concepts, and use knowledge to change one’s environment.” To date, however, AI systems lack, for example, the human trait of self-awareness. They still rely on human input in the form of created models and selected training data. This implies that partially intelligent systems are heavily influenced by the views, experiences and backgrounds of humans and can thus also exhibit cognitive biases.

Bias is defined as “…the act of unfairly supporting or opposing a particular person or thing by allowing personal opinions to influence judgement” [6]. Causes of cognitive distortions in human thinking and decision-making include information overload, a lack of meaning in the available information, the need to act quickly, and uncertainty about what needs to be remembered later and what can be forgotten [7]. As a result of cognitive biases, people can be unconsciously deceived and may not recognise the lack of objectivity in their conclusions [8].

The co-author’s bachelor’s thesis, “Bias – A lurking danger that can convert algorithmic systems into discriminatory entities” [1], first shows that biases in algorithmic systems are a source of unfair and discriminatory decisions. It then develops a framework that aims to contribute to AI safety by proposing measures that help to identify and mitigate biases during the development, implementation and application phases of AI systems. The framework consists of a metamodel that comprises 12 essential areas (e.g. “Project Team”, “Environment and Content”) and covers the entire software lifecycle (see Fig. 1). A checklist is available for each area, which allows the area to be considered and analysed in greater depth.

Figure 1: Metamodel of the Bias Identification and Mitigation Framework

As an example, the area “Project Team” is explained in more detail below (see Fig. 2). The knowledge, views and attitudes of individual team members cannot be deleted or hidden, since they are usually unconscious and stem from each member’s different background and varied experiences. The resulting bias is likely to be carried over into the algorithmic system.

Figure 2: Checklist excerpt for the “Project Team” section of the metamodel

Therefore, measures need to be taken to ensure that the system has the level of fairness appropriate to its context. Before the system is designed, the project members need to exchange their views and concerns openly, fully and transparently. Misunderstandings, conflicting ideas, excessive euphoria, unconscious assumptions and otherwise invisible aspects can be uncovered in this way. The Project Team checklist contains the following concrete measures to address these problems.

All project members:

- have participated in training on ethics,
- are aware of the bias that exists in the human decision-making process,
- know that bias can be reflected in an algorithmic system, and
- consider the same attributes and factors to be most relevant in the system context.

The project team:

- reflects representatives from all possible end-user groups,
- is a cross-functional team with diversity in terms of ethnicity, gender, culture, education, age and socio-economic status, and
- consists of representatives from the public and private sectors.

The co-author’s bachelor’s thesis includes checklists for all the areas listed in the metamodel. The framework is intended as a starting point that can be adapted to the specific needs of a given project context. The proposed approach takes the form of a guideline, e.g. for the members of a project team. The framework can be adapted on the basis of a defined understanding of system neutrality, which may be specific to the particular application or application domain. If the adapted framework is made mandatory within a project, it is very likely that the developed application will better reflect the neutrality defined by the project team or company.

Checking whether the framework has been applied and its requirements met helps to find out whether the system meets the defined neutrality criteria, or whether and where action is needed. To adequately address bias in algorithmic systems, overarching and comprehensive governance must be in place in organisations that take AI responsibility seriously. Ideally, project members internalise the framework and consider it a binding standard.
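
The idea of checking a checklist to see “whether and where action is needed” can be illustrated with a small sketch. It is not part of the thesis or the framework: the `ChecklistItem` class, the `open_items` function and the recorded answers are hypothetical, and the statements merely paraphrase a few items from the “Project Team” checklist. The sketch assumes each item can be answered with a simple yes or no, which a real project may well want to refine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistItem:
    """One checklist statement (hypothetical structure, not from the thesis)."""
    area: str       # metamodel area, e.g. "Project Team"
    statement: str  # requirement wording, paraphrased from the checklist
    met: bool       # assumed yes/no answer recorded by the project team


def open_items(items):
    """Group all unmet checklist statements by metamodel area."""
    gaps = {}
    for item in items:
        if not item.met:
            gaps.setdefault(item.area, []).append(item.statement)
    return gaps


# Illustrative answers only; they do not describe a real project.
answers = [
    ChecklistItem("Project Team", "All members have participated in training on ethics", True),
    ChecklistItem("Project Team", "All members are aware of bias in human decision-making", True),
    ChecklistItem("Project Team", "Team reflects representatives from all end-user groups", False),
]

# Report every area that still has unmet statements.
for area, statements in open_items(answers).items():
    print(f"{area}: action needed")
    for statement in statements:
        print(f"  - {statement}")
```

Areas that do not appear in the result have no open items. Recording the answers per area keeps the link to the metamodel, so the team can see where in the software lifecycle action is still needed.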

References

- [1] Gasser, T. (2019). Bias – A lurking danger that can convert algorithmic systems into discriminatory entities: A framework for bias identification and mitigation. Bachelor’s Thesis. Degree Programme in Business Information Technology. Häme University of Applied Sciences.

- [2] Cossins, D. (2018). Discriminating algorithms: 5 times AI showed prejudice. Retrieved January 17, 2019.

- [3] Plenke, M. (2015). The Reason This “Racist Soap Dispenser” Doesn’t Work on Black Skin. Retrieved June 20, 2019.

- [4] Levin, S., & Wong, J. C. (2018). Self-driving Uber kills Arizona woman in first fatal crash involving pedestrian. The Guardian. Retrieved February 17, 2019.

- [5] Sternberg, R. J. (2017). Human intelligence. Retrieved June 20, 2019.

- [6] Cambridge University Press. (2019). BIAS | meaning in the Cambridge English Dictionary. Retrieved June 20, 2019.

- [7] Benson, B. (2016). You are almost definitely not living in reality because your brain doesn’t want you to. Retrieved June 20, 2019.

- [8] Tversky, A., & Kahneman, D. (1974). Judgment under Uncertainty: Heuristics and Biases. Science, New Series, 185(4157), 1124-1131.
